How to Do Database Optimization for High-Traffic Mobile Apps?

Welcome to the world of mobile applications, where handling heavy traffic and immense volumes of data can feel like juggling a dozen balls at once!

Keeping an app responsive gets harder as the user base grows, which is especially true for a social media app development company. Teams also have to think about how to secure mobile apps and improve the experience users have with them.

Database optimization helps with exactly that. It gives your software a boost in performance and scalability, which in turn improves the user experience. It is also a direct answer to the question of how to improve mobile app performance.

This guide looks at database optimization for popular mobile apps. We will cover the issues that come with large data sets, how to make queries faster, and how to eliminate annoying bottlenecks.

Get this right, and you can release your app to the world and watch users swipe through your digital universe with big grins on their faces.

Foundational Pillars of Database Optimization

Database optimization rests on several pillars, each equally important for performance, scalability, and efficiency. The key pillars are described below.

1. Normalization

Normalization comes down to arranging data into meaningful tables and eliminating data duplication.

The main objective of normalization is to reduce data redundancy and remove undesirable dependencies. This improves data integrity and lowers the risk of anomalies when data is inserted, updated, or deleted.

First Normal Form (1NF): Every column in a 1NF table must hold atomic values that cannot be divided further. In addition, each column should have a distinct name, and each row should be unique. This rules out storing multiple values in a single cell and keeps the data in a clean tabular form.

Second Normal Form (2NF): 2NF extends 1NF by ensuring that all non-key attributes are fully functionally dependent on the complete primary key. In other words, each non-key attribute depends on the whole primary key and not just a part of it, which matters most when the key spans several columns. 2NF removes partial dependencies and, with them, more duplicated data.

Third Normal Form (3NF): 3NF goes one step further and eliminates transitive dependencies. All non-key attributes must depend only on the primary key and not on other non-key attributes. This removes the repetition caused by indirect relationships between attributes.
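As a rough illustration of the normal forms above, here is a minimal sketch using Python's built-in sqlite3 module. The orders schema, table names, and sample rows are assumptions made up for this example, not taken from any particular app.

```python
import sqlite3


def build_normalized_schema(conn: sqlite3.Connection) -> None:
    """Create a small schema in which each fact is stored in exactly one place."""
    conn.executescript(
        """
        CREATE TABLE customers (
            customer_id INTEGER PRIMARY KEY,
            name        TEXT NOT NULL,
            city        TEXT NOT NULL
        );
        CREATE TABLE products (
            product_id INTEGER PRIMARY KEY,
            title      TEXT NOT NULL,
            unit_price REAL NOT NULL
        );
        CREATE TABLE orders (
            order_id    INTEGER PRIMARY KEY,
            customer_id INTEGER NOT NULL REFERENCES customers(customer_id)
        );
        -- One row per (order, product): atomic values and a composite key.
        -- quantity depends on the whole key, not on just one part of it.
        CREATE TABLE order_items (
            order_id   INTEGER NOT NULL REFERENCES orders(order_id),
            product_id INTEGER NOT NULL REFERENCES products(product_id),
            quantity   INTEGER NOT NULL,
            PRIMARY KEY (order_id, product_id)
        );
        """
    )


conn = sqlite3.connect(":memory:")
build_normalized_schema(conn)
conn.execute("INSERT INTO customers VALUES (1, 'Asha', 'Pune')")
conn.execute("INSERT INTO products VALUES (10, 'Phone case', 9.5)")
conn.execute("INSERT INTO orders VALUES (100, 1)")
conn.execute("INSERT INTO orders VALUES (101, 1)")
conn.execute("INSERT INTO order_items VALUES (100, 10, 2)")
conn.execute("INSERT INTO order_items VALUES (101, 10, 1)")

# The city is stored once, so changing it touches a single row
# no matter how many orders the customer has placed.
updated = conn.execute(
    "UPDATE customers SET city = 'Mumbai' WHERE customer_id = 1"
).rowcount
```

Because the customer's city is not copied into every order row, there is no way for two orders to disagree about it.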

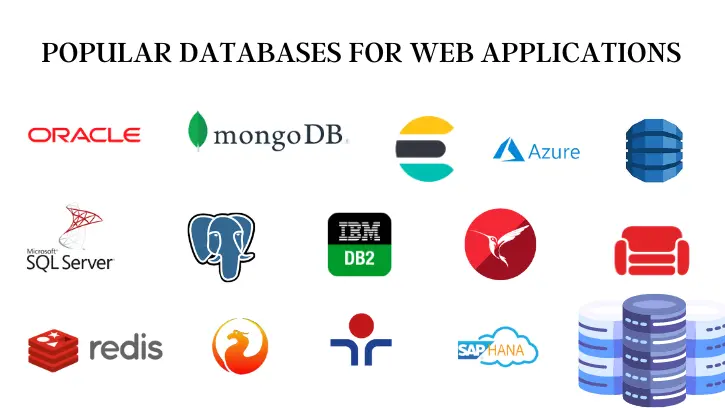

2. Selecting Database System

When choosing a database, it is vital to weigh relational databases like PostgreSQL, MySQL, and SQL Server against NoSQL alternatives like MongoDB and Cassandra. The choice is largely determined by the structure of your data and the kind of queries you run.

Popular Relational Database

Relational databases (PostgreSQL, MySQL, and SQL Server)

Structured Data: Relational databases excel at managing structured data with well-defined schemas. If your data is structured and you need to model many relationships between entities, a relational database is usually a strong choice.

ACID Compliance: These systems guarantee ACID (Atomicity, Consistency, Isolation, Durability) properties, which ensure data integrity and consistent transactions. This gives them an edge in applications where integrity is critical, such as banking systems or e-commerce platforms.

SQL Support: Relational databases use SQL (Structured Query Language), a powerful and widely adopted language for working with relational data. If your team is familiar with SQL or your application needs to run complex queries, relational databases are appropriate.

Source: https://www.taffinc.com/

NoSQL Options (MongoDB, Cassandra)

Flexible Schema: While relational databases like MySQL, Oracle, and PostgreSQL have a fixed structure with predefined schemas, NoSQL databases like MongoDB and Cassandra offer more flexible schemas for semi-structured and unstructured data. Such flexibility is very helpful in situations with shifting requirements or very diverse data.

Scalability: NoSQL databases are designed to scale horizontally, which makes them well suited to large data sets and high-volume workloads. MongoDB and Cassandra in particular are popular for their distributed architecture and for scaling across nodes with relatively little effort.

Query Patterns: NoSQL databases are optimized for particular query styles, for example key-value lookups, document-based queries (MongoDB), and wide-column queries (Cassandra). For applications whose data naturally fits a key-value or document form, NoSQL databases can outperform relational databases in performance and scalability.

When choosing between relational and NoSQL databases, consider the shape of your data, your query patterns, and how far the system needs to scale.

Relational databases are designed for structured data and complex query processing, while NoSQL databases are tailored to offer flexibility, scalability, and optimized query patterns.

Ultimately, the decision should be based on the specific needs and constraints of your project.

3. Distributed Databases

A distributed database spreads data across multiple servers or nodes instead of storing it on a single server. The approach was developed to handle very large data volumes.

Massive Volumes of Data: Many applications, especially the latest generation of web services, generate and store rapidly growing amounts of data. That much information can exceed a single server’s capacity to store, process, and manage it properly.

Scalability: Distributed databases solve the problem of how to deal with huge volumes of data by distributing the workload across various servers or nodes. The distributed nature of the system allows for data and processing load to be shared, making it possible to scale horizontally by adding more servers whenever needed to handle increasing volumes of data and user demands.

Load Distribution: Distributed databases spread data and processing tasks across multiple servers, which keeps any single server from becoming a bottleneck. Each server holds part of the data and handles part of the workload, so together they manage the database and serve user requests effectively.

Fault Tolerance: Distributed databases often provide redundancy and fault tolerance to keep data available and reliable. If a server fails or the network is interrupted, the system can usually keep running because requests are redirected to the remaining servers, while redundant copies help keep the data consistent.

How to Do Database Optimization?

A handful of fundamental methods form the foundation of database optimization. They help businesses get the full capacity out of their data infrastructure.

Indexing is one such method and partitioning is another. Each targets different operations to eliminate bottlenecks and boost performance.

This section focuses on the core optimization principles used in database management and on how each method contributes to the performance, scalability, and overall effectiveness of an app.

By mastering these optimization strategies, companies can use their databases efficiently to support innovation, agility, and competitive advantage in the present data-driven environment.

Indexing

Indexing is one of the most important database optimization techniques. It can greatly improve query and data retrieval performance.

By designing and maintaining indexes according to query patterns and performance considerations, database administrators can strike a balance between the benefits and the costs of indexing.

Benefits of Proper Indexing

Improved Query Performance: An appropriately indexed column lets SELECT queries run considerably faster, so users wait less for their data.

Reduction in Full-Table Scans: With indexes, the database can reach the rows it needs without scanning the entire table, which makes data retrieval faster.

Enhanced Efficiency: Well-designed indexes reduce the number of data access and retrieval operations each query needs, which raises overall database efficiency.

Considerations for Indexing

Column Selection: Deciding which columns to index is important. Columns that are often used in WHERE conditions, JOINs, and ORDER BY clauses are good index candidates.

Query Patterns: Identify the types of queries that run against the database and index the columns those queries filter, join, and sort on.

Balancing Act: Indexes can boost query performance, but adding too many is not a good idea because each one costs additional storage and slows down write operations. Finding the right balance should not be underestimated.
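To make the effect of an index concrete, the following sketch uses Python's built-in sqlite3 module and compares the query plan before and after an index is created. The posts table, its columns, and the index name are hypothetical, and the exact wording of the plan text varies between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE posts (id INTEGER PRIMARY KEY, user_id INTEGER, body TEXT)"
)
conn.executemany(
    "INSERT INTO posts (user_id, body) VALUES (?, ?)",
    [(i % 500, f"post {i}") for i in range(10_000)],
)


def plan(sql: str) -> str:
    """Return the human-readable steps of SQLite's plan for a query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # Each row is (id, parent, notused, detail); the detail column is the text.
    return " | ".join(row[3] for row in rows)


query = "SELECT body FROM posts WHERE user_id = 42"

plan_before = plan(query)  # no index on user_id yet: expect a full table scan
conn.execute("CREATE INDEX idx_posts_user_id ON posts (user_id)")
plan_after = plan(query)   # the planner can now search via the index

print("before:", plan_before)
print("after: ", plan_after)
```

The same index would also have to be updated on every insert into posts, which is the write-side cost mentioned under Balancing Act.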

Query Optimization

Query optimization is the process of improving the performance and efficiency of database queries. It involves examining queries and their execution plans to find bottlenecks and inefficiencies, then proposing and applying changes that improve query performance.

Benefits of Query Optimization

Improved Performance: Well-optimized queries return results in less time, which improves system performance and reduces wait time for users.

Enhanced Scalability: Efficient queries use fewer resources, so the system can handle more concurrent requests before performance degrades.

Reduced Resource Consumption: Tuned queries use less CPU, memory, and disk I/O, which lowers resource use and operational cost.

Better User Experience: Faster queries make for a smooth, responsive user experience, which leads to a more satisfied and engaged audience.

Lower Latency: Precise queries reduce the time needed to fetch and process data, which results in quicker response times for users and applications.

Tools for Query Optimization

EXPLAIN: Shows the execution plan the database intends to use for a query, operation by operation. This helps locate performance bottlenecks and guides changes that lead to a better plan.

ANALYZE: Collects statistics about tables and indexes, such as how the data is distributed. The query planner relies on these statistics, and they also help identify areas for optimization.

Database Profilers: Keep track of database activity such as slow queries, resource-demanding operations, and performance bottlenecks so that optimizations can be targeted.

Query Tuning Advisors: Offer automated advice on query optimization, including index improvements, query rewrites, and configuration changes.

Query Plan Visualizers: Display query execution plans graphically to highlight performance-critical operations and optimization opportunities.
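The sketch below shows two of these ideas in miniature, again with Python's built-in sqlite3 module: running ANALYZE to collect planner statistics, and a tiny timing helper of the kind a profiler would build on. The events table and the timed_query helper are hypothetical names for illustration, and other database systems expose statistics and profiling in their own ways.

```python
import sqlite3
import time

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (id INTEGER PRIMARY KEY, user_id INTEGER, kind TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, kind) VALUES (?, ?)",
    [(i % 200, "tap" if i % 3 else "scroll") for i in range(5_000)],
)
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")

# ANALYZE gathers statistics that SQLite's planner consults when choosing a plan.
# SQLite stores them in the sqlite_stat1 table.
conn.execute("ANALYZE")
stats = conn.execute(
    "SELECT stat FROM sqlite_stat1 WHERE idx = 'idx_events_user'"
).fetchone()


def timed_query(sql: str, params: tuple = ()) -> tuple:
    """Run a query and return (rows, elapsed_seconds), as a profiler might log."""
    start = time.perf_counter()
    rows = conn.execute(sql, params).fetchall()
    return rows, time.perf_counter() - start


rows, elapsed = timed_query("SELECT kind FROM events WHERE user_id = ?", (7,))
print(f"{len(rows)} rows in {elapsed * 1000:.3f} ms, index stats: {stats}")
```

Logging the elapsed time of every query and sorting by the slowest is often enough to find the first few queries worth tuning.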

Scaling for Growth

Scaling for growth means increasing the system’s capacity to handle a bigger load. There are three main ways to do this: vertical scaling, horizontal scaling, and replication.

Each scaling technique has its advantages and drawbacks, which organizations have to weigh carefully when choosing what fits their growing infrastructure.

Vertical Scaling

Meaning: Vertical scaling, which is also referred to as scaling up, entails increasing the capacity of one server by adding more CPU, memory, or storage. It commonly refers to using a more powerful server.

Inherent Limitations: Vertical scaling has hard limits. Beyond a certain point, adding capacity to one machine becomes expensive or simply impractical. Furthermore, a single server is a single point of failure, which can lead to downtime and loss of data.

Horizontal Scaling (Sharding)

Data Distribution: Horizontal scaling, also called scaling out, distributes data among several servers or nodes. Sharding is one of the most widespread methods of horizontal scaling: the data is partitioned into chunks, and each chunk (shard) lives on a separate server.

Complexity Involved: Sharding introduces complexity: managing data distribution, maintaining data consistency across shards, and rebalancing shards as the system grows. Implementing and maintaining a sharded system requires meticulous planning and coordination.

Improving Throughput: In return, horizontal scaling spreads the workload across several servers, so the system can handle more concurrent requests without overloading any single server.
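One common way to decide which shard holds a record is to hash a key such as the user ID. The sketch below assumes four shards with made-up names and a hypothetical shard_for helper; it is only meant to show the routing idea, not a production sharding layer.

```python
import hashlib

# Hypothetical shard names; in practice these would map to connection settings.
SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]


def shard_for(user_id: str, shards: list = SHARDS) -> str:
    """Pick a shard for a user by hashing the ID (stable across processes)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    # Use the first 8 bytes of the digest as an integer, then take it modulo
    # the number of shards to get an index.
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]


# The same user always lands on the same shard.
print(shard_for("user-12345"))

# Count how 10,000 made-up users spread across the shards.
counts = {name: 0 for name in SHARDS}
for i in range(10_000):
    counts[shard_for(f"user-{i}")] += 1
print(counts)
```

A plain modulo scheme like this remaps most keys when the number of shards changes, which is one reason rebalancing is hard. Techniques such as consistent hashing are often used to reduce that movement.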

Replication

Distributing Read Traffic: Replication is commonly used to keep read-intensive workloads fast by building replicas of the database. Read replicas take read queries off the primary database, which reduces the load on the primary server and contributes to scalability.

Benefits for High-Read Scenarios: Replication lets applications scale reads horizontally by spreading read requests across copies. Applications can then serve a larger number of reads without affecting the consistency of writes.

Failover and High Availability: Replication enables failover and high availability by keeping copies of the data in more than one location. If the primary database fails, a replica can be promoted to primary and continue to serve requests, so downtime is minimized.
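A minimal sketch of read/write splitting might look like the following. The ReplicaRouter class and the server names are hypothetical, and a real router would also need to handle transactions, health checks, and failover.

```python
import itertools


class ReplicaRouter:
    """Send reads to replicas in round-robin order and writes to the primary."""

    def __init__(self, primary: str, replicas: list):
        self.primary = primary
        # cycle() yields the replicas over and over; None means "no replicas".
        self._replicas = itertools.cycle(replicas) if replicas else None

    def route(self, sql: str) -> str:
        # Simplifying assumption: any statement starting with SELECT is a read.
        is_read = sql.lstrip().upper().startswith("SELECT")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        return self.primary


router = ReplicaRouter("primary-db", ["replica-1", "replica-2"])
targets = [
    router.route("SELECT * FROM posts WHERE user_id = 1"),
    router.route("SELECT * FROM posts WHERE user_id = 2"),
    router.route("INSERT INTO posts (user_id, body) VALUES (1, 'hi')"),
    router.route("SELECT COUNT(*) FROM posts"),
]
print(targets)
```

With asynchronous replication, a replica can lag slightly behind the primary, so reads that must see a write made moments earlier are often sent to the primary instead.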

Advanced Considerations

The following sections cover more advanced techniques for optimizing databases.

Caching

In-Memory Caching Role: In-memory caching solutions such as Memcached and Redis keep frequently accessed data in memory, which speeds up retrieval and reduces the number of database lookups.

Best Practices: Identify hot data, set suitable expiration policies, and put cache invalidation mechanisms in place. Together these keep cached data consistent with the database and maximize the cache hit rate.
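The practices above can be sketched with a small cache-aside helper. This example uses a plain in-process dictionary as a stand-in for Memcached or Redis, and the TTLCache class and its method names are assumptions made for illustration.

```python
import time


class TTLCache:
    """Cache-aside helper with per-entry expiry and explicit invalidation."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be tested
        self._store = {}             # key -> (value, expires_at)
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key, loader):
        """Return the cached value, or call loader(key) and cache the result."""
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[1] > now:
            self.hits += 1
            return entry[0]
        self.misses += 1
        value = loader(key)          # e.g. a database query
        self._store[key] = (value, now + self._ttl)
        return value

    def invalidate(self, key) -> None:
        """Drop a key, typically right after the underlying row is updated."""
        self._store.pop(key, None)


def load_profile(user_id):
    # Placeholder for a real database lookup.
    return {"user_id": user_id, "name": f"user {user_id}"}


cache = TTLCache(ttl_seconds=30)
cache.get_or_load(1, load_profile)   # miss: goes to the "database"
cache.get_or_load(1, load_profile)   # hit: served from memory
print(cache.hits, cache.misses)
```

Calling invalidate whenever the underlying row changes keeps readers from seeing stale data for the rest of the expiry window.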

Data Compression

Usage and Benefits: Storing data in a compressed format saves storage space, reduces disk I/O, and speeds up data transfer. It works best for big, repetitive datasets, where the savings outweigh the overhead.

Potential Trade-offs: Although compression brings storage and I/O advantages, it adds CPU overhead each time data is compressed or decompressed. Balancing CPU resources against data access patterns helps keep this trade-off manageable.
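To get a feel for the trade-off, the sketch below compresses a repetitive, made-up dataset with Python's standard zlib module and compares sizes. Real databases use their own compression formats and settings, so treat the numbers only as an illustration.

```python
import json
import zlib

# Made-up, highly repetitive rows, similar in spirit to status or log tables.
rows = [{"user_id": i, "status": "active", "country": "IN"} for i in range(1_000)]

raw = json.dumps(rows).encode("utf-8")
compressed = zlib.compress(raw, level=6)   # higher levels cost more CPU
ratio = len(compressed) / len(raw)

# Decompression restores the data exactly, at the cost of some CPU time.
restored = json.loads(zlib.decompress(compressed))

print(f"raw={len(raw)} bytes, compressed={len(compressed)} bytes, ratio={ratio:.2f}")
```

Data that is already compressed or close to random, such as images or encrypted blobs, shrinks far less, so the CPU cost is harder to justify there.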

Data Partitioning

Efficiency Enhancement: Data partitioning splits a large table into smaller logical units based on criteria such as value ranges or a hash function. Spreading the data across several storage devices or servers enables parallel processing and reduces contention, which improves overall efficiency.

Optimal Implementation: Successful partitioning techniques take into account query patterns, access frequency, and maintenance overhead to achieve a balanced data distribution and optimized query performance.
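As a small sketch of range partitioning, the helpers below map dates to monthly partitions. The naming scheme (table_YYYY_MM) and both function names are assumptions for this example. Many database systems offer built-in partitioning that does this routing automatically.

```python
from datetime import date


def partition_name(table: str, day: date) -> str:
    """Name of the monthly partition that holds rows for the given day."""
    return f"{table}_{day.year:04d}_{day.month:02d}"


def partitions_for_range(table: str, start: date, end: date) -> list:
    """List only the partitions a query over [start, end] has to touch."""
    names = []
    year, month = start.year, start.month
    while (year, month) <= (end.year, end.month):
        names.append(f"{table}_{year:04d}_{month:02d}")
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return names


print(partition_name("messages", date(2024, 3, 15)))
print(partitions_for_range("messages", date(2023, 11, 20), date(2024, 1, 5)))
```

A query limited to a date range can skip every other partition, which is why matching the partition key to the most common filter matters so much.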

Offloading Analytics

Separate Databases or Data Warehouses: Moving analytics to separate databases or data warehouses decouples analytical workloads from transactional ones. This reduces resource contention and keeps performance stable for both.

Benefits: Dedicated analytical environments combine hardware and software optimized for complex reporting and analysis over vast datasets, without slowing down the transactional systems.

Performance Metrics and Monitoring

Tracking performance metrics and monitoring the database are ongoing processes that keep the system aligned with evolving business needs and performance expectations.

Key Performance Metrics

Throughput: The number of transactions or queries processed per unit of time, which indicates how much work the database can handle.

Latency: The time taken for a query or transaction to complete, which reflects how responsive the database is.

Concurrency: The number of operations or queries a database can process at the same time without slowing down.

Resource Utilization: Tracks CPU, memory, disk I/O, and network utilization to spot resource bottlenecks and optimize resource allocation.
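Throughput and latency are straightforward to compute once query timings are collected. The sketch below assumes a list of latencies in milliseconds gathered over a fixed time window. The summarize function and its field names are hypothetical, and it uses the simple nearest-rank method for percentiles.

```python
def summarize(latencies_ms: list, window_seconds: float) -> dict:
    """Summarize query latencies collected over a window of the given length."""
    if not latencies_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(latencies_ms)

    def percentile(p: int):
        # Nearest-rank percentile: ceil(p * n / 100) using integer arithmetic.
        rank = max(1, -(-p * len(ordered) // 100))
        return ordered[rank - 1]

    return {
        "throughput_qps": len(ordered) / window_seconds,
        "p50_ms": percentile(50),
        "p95_ms": percentile(95),
        "max_ms": ordered[-1],
    }


# Made-up sample: 100 queries observed over a 10-second window.
sample = list(range(1, 101))
summary = summarize(sample, window_seconds=10)
print(summary)
```

Percentiles such as p95 are often more useful than averages, because a small share of very slow queries can hurt many users while barely moving the mean.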

Tools for Performance Monitoring

Database Management Systems (DBMS) Tools: Most database management systems provide monitoring tools or management consoles that gather database performance metrics in real time.

Third-Party Monitoring Solutions: Third-party database monitoring tools provide advanced analytics, alerting systems, and customizable dashboards.

Importance of Performance Monitoring

Early Detection of Issues: Performance monitoring can catch issues such as slow queries, resource congestion, or configuration problems before they become critical failures.

Optimization Opportunities: By studying performance metrics, administrators can find opportunities to optimize, fine-tune configurations, and apply best practices that raise the efficiency of the database.

Capacity Planning: Monitoring performance metrics over time supports capacity planning, so administrators can anticipate future resource needs and scale the infrastructure proactively.

Best Practices for Monitoring

Define Clear Objectives: Set clear performance goals and metrics that fit the available resources and the expectations of the application’s users.

Regular Monitoring: Monitor performance metrics in real time and collect historical data to identify trends, anomalies, and performance degradation.

Alerting and Notification: Set up alerts that notify the responsible people about critical incidents or metrics that cross predefined thresholds.

Root Cause Analysis: When performance issues arise, carry out a thorough root cause analysis to find the factors that made the problem possible in the first place, then take action to fix them.
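Threshold-based alerting can be sketched in a few lines. The AlertRule class, the evaluate function, the metric names, and the threshold values below are all assumptions for illustration. Real monitoring tools add features such as alert durations, severities, and notification routing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    metric: str        # name of the metric to watch
    threshold: float   # alert when the observed value goes above this


def evaluate(rules: list, sample: dict) -> list:
    """Return one message per rule whose metric exceeds its threshold."""
    alerts = []
    for rule in rules:
        value = sample.get(rule.metric)
        if value is not None and value > rule.threshold:
            alerts.append(f"{rule.metric}={value} exceeds {rule.threshold}")
    return alerts


rules = [
    AlertRule("p95_ms", 250.0),
    AlertRule("cpu_percent", 85.0),
]
# Made-up metrics snapshot from a monitoring system.
alerts = evaluate(rules, {"p95_ms": 310.0, "cpu_percent": 40.0})
print(alerts)
```

Keeping the rules as data makes it easy to review and adjust thresholds as the objectives defined earlier change.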

Database Optimization as a Never-Ending Cycle of Iteration

Iterative optimization is a systematic way of improving the efficiency, effectiveness, and productivity of a system or process by repeating the same cycle of steps. The repetition encourages continuous learning and adaptation to feedback and performance metrics, which leads to steady, incremental improvement.

1. Evaluation and Analysis

Assess Current Performance: Evaluate the performance of the system by analyzing performance metrics, bottlenecks, and limits of the system.

Gather Feedback: Ask users, developers, and stakeholders about their pain points, their performance expectations, and what should be improved.

2. Goal Setting and Prioritization

Define Optimization Goals: Establish objectives and performance indicators based on the evaluation results and the feedback gathered.

Prioritize Optimization Efforts: Pick out the main performance issues and order the optimization work by its impact on user experience, system stability, and business objectives.

3. Strategy Development

Identify Optimization Strategies: Brainstorm and research the optimization methods, techniques, and best practices that are used for solving those performance issues.

Tailor Strategies to Goals: Adjust the optimization strategies so they line up with the outlined goals, the primary concerns, and the characteristics of the database system.

4. Implementation and Testing

Execute Optimization Plans: Apply the planned optimizations, changes, and performance tuning options in the database environment.

Testing and Validation: Rigorously test and validate whether the adopted optimizations delivered the expected improvements in performance and operational efficiency.

5. Monitoring and Measurement

Monitor Performance Metrics: After applying the optimizations, track critical performance metrics and indicators to see their effect on database performance.

Measure Results: Judge the success of the optimization by comparing the results with the goals and performance targets that were set, noting what worked and what did not.

6. Feedback and Adjustment

Gather Feedback: Collect feedback from the main stakeholders, including users, developers, and system administrators, on how the optimizations affected system performance and user experience.

Iterative Adjustment: Based on the feedback and the monitoring results, make successive corrections to optimization strategies and configurations to fine-tune the database and resolve any remaining problems.

7. Continuous Improvement

Iterative Cycle: Optimization consists of a continuous process of evaluation, implementation, testing, and adjustment.

Continuous Learning: Record what each optimization cycle teaches you and apply those lessons in the next one, so that performance improves with every phase.

Conclusion

To summarize, database optimization for a high-traffic mobile application aims at user satisfaction and sustainable performance. Strategic indexing, query optimization, caching, and data compression all help databases process large amounts of data quickly.

Scaling techniques such as horizontal sharding add capacity as the app grows, while real-time monitoring supports continuous performance tuning. With iterative refinement, the database can keep up with the demands of high mobile traffic.

A carefully tailored database structure not only keeps everything working together but also lays the groundwork for a mobile application that can grow with its market.